ASLR stands for Address Space Layout Randomization, and it is a critical obstacle that has to be overcome. The simplest way to explain it is to go back to the earliest days of hacking, which is where I came from.

*Starting here, I have direct first-hand experience in hacking OSes and software.*

When programs loaded into memory back then, they always loaded at a fixed address. That meant we always knew where to start reading the machine code (usually viewed as disassembled Assembly Language) to see what the programmers had done. They would sometimes get tricky and try to "hide" the code by using one piece of code to load another, sometimes even hiding it in video memory, thinking "they will never look there". But because the very first piece of code always had to load at a fixed address, we simply had to keep reading until we found out where they had hidden the next section.

Skip forward to the Windows days of the 90s. The problem still existed. Yes, we could now load multiple programs into memory, but the code still started at address X and always stayed in a contained space that was easy to identify. That meant we could still see the code, read it, and finally figure out "how things worked". This is also, in my opinion, when the age of "buffer overrun" exploits took off. I'll explain more in the next era.

*This is where I left "the scene" and my direct first-hand knowledge ends. From here on I am writing about my understanding of the scene as it has evolved, from watching the next generation do more than I had ever dreamed.*

Then along came Windows 2000, and things started to change in operating systems for end users (us everyday folks). They figured out how to start protecting memory, or so they thought. The OS relied on things called Rings, privilege levels that help protect the core OS from the programs running on top of it. Rings themselves were not new (x86 CPUs and Windows NT already had them), but this is when that protection started reaching everyday desktops. It wasn't foolproof. Not by a long shot. Enter buffer overruns: the simple trick of feeding a program more data than a buffer can hold, so that the extra bytes spill over whatever sits at a known offset from the program's known starting point, and code gets run that the original programmer never intended. While buffer overruns have been around since the early days of computers, it was in 2001, in my opinion, that they became the de facto way to take over and exploit code and OSes going forward. The old days of reading all the machine code to figure things out had simply become passé.
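To make the overrun idea concrete, here is a toy model in Python. It is only a sketch, not a real exploit: the "stack frame" is a byte array, and the buffer size, the addresses, and the function names (`new_frame`, `unchecked_copy`, `checked_copy`, `return_address`) are all made up for illustration.

```python
# Toy model of a stack frame: an 8-byte buffer followed directly by a
# 4-byte saved return address. All sizes and addresses are hypothetical.
BUF_SIZE = 8
RET_SIZE = 4
LEGIT_RETURN = 0x00401000   # where the program meant to return to
ATTACKER_CODE = 0x0012FF00  # where the attacker's injected code would sit


def new_frame() -> bytearray:
    """Build the frame: empty buffer, then the legitimate return address."""
    return bytearray(BUF_SIZE) + LEGIT_RETURN.to_bytes(RET_SIZE, "little")


def unchecked_copy(frame: bytearray, data: bytes) -> None:
    """Copy byte by byte without checking the buffer size (the bug)."""
    for i, byte in enumerate(data):
        frame[i] = byte


def checked_copy(frame: bytearray, data: bytes) -> None:
    """Same copy, but refuse anything that does not fit in the buffer."""
    if len(data) > BUF_SIZE:
        raise ValueError("input larger than buffer")
    unchecked_copy(frame, data)


def return_address(frame: bytearray) -> int:
    """Read back the saved return address stored right after the buffer."""
    return int.from_bytes(frame[BUF_SIZE:BUF_SIZE + RET_SIZE], "little")


if __name__ == "__main__":
    frame = new_frame()
    # 8 filler bytes fill the buffer; the next 4 land on the return address.
    payload = b"A" * BUF_SIZE + ATTACKER_CODE.to_bytes(RET_SIZE, "little")
    unchecked_copy(frame, payload)
    print(hex(return_address(frame)))
```

The payload only works because the attacker knows two things in advance: how far the return address sits from the start of the buffer, and the address of the code to jump to. That second piece of knowledge is what the next part of the story takes away.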

ASLR was born that same year, 2001, as part of the PaX patch for Linux. But how do you keep an OS stable when the OS, the drivers and the user's code are constantly being loaded into random locations? By 2005 they had it worked out well enough that Linux kernel 2.6.12 shipped with it enabled by default. OS X Leopard, Windows Vista, iOS 4.3 and Android 4.0 would be the next major releases to introduce the "Random Layout" of the "Address Space".

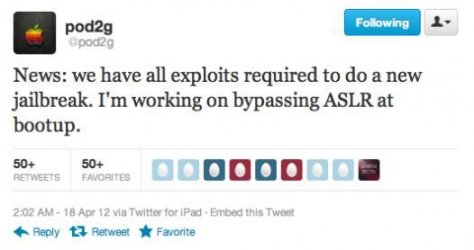

So how does ASLR help protect against buffer overruns? The hacker can no longer predict where to point the overrun so that his code actually gets run, because ASLR puts things at a different address every time they are loaded. Does this mean ASLR stops all buffer overruns? No. Does it make them harder? Oh yes. Today hackers are looking for ways to simply bypass ASLR. But it takes time and lots of hot pockets (watch "The Core" to get the reference) to find those types of exploits. And each new version of an OS usually means starting over in identifying the holes.
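Here is a rough Python sketch of why randomization hurts the attacker. It is a simulation under made-up assumptions, not how any real loader works: `FIXED_BASE`, `TARGET_OFFSET`, the 4 KiB alignment, and the `load_base` and `exploit_hits` helpers are all hypothetical, and the amount of randomness real systems use varies by OS and architecture.

```python
import random

PAGE = 0x1000            # assumed 4 KiB alignment for load addresses
FIXED_BASE = 0x00400000  # hypothetical "always loads here" base address
TARGET_OFFSET = 0x1234   # hypothetical offset of the code the attacker wants


def load_base(entropy_bits: int, rng: random.Random) -> int:
    """Pick a load address; 0 bits of entropy is the old fixed layout."""
    slot = rng.randrange(2 ** entropy_bits)
    return FIXED_BASE + slot * PAGE


def exploit_hits(entropy_bits: int, trials: int, seed: int = 0) -> int:
    """Count how often an address hardcoded by the attacker is still right."""
    rng = random.Random(seed)
    guessed = FIXED_BASE + TARGET_OFFSET  # attacker assumes the fixed layout
    hits = 0
    for _ in range(trials):
        actual = load_base(entropy_bits, rng) + TARGET_OFFSET
        if actual == guessed:
            hits += 1
    return hits


if __name__ == "__main__":
    print("fixed layout:", exploit_hits(0, 1000), "of 1000 attempts land")
    print("8 bits of randomness:", exploit_hits(8, 1000), "of 1000 attempts land")
```

With no randomness the hardcoded address is right every time. With 8 bits there are 256 possible bases, so a blind guess lands roughly once in 256 tries, and every miss most likely crashes the target instead of running the attacker's code. That is why bypasses tend to focus on learning the real address first rather than guessing it.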

*Anyone with more direct knowledge, please feel free to chime in and correct my explanation. Watching it from the outside doesn't always provide the best insight.*